openai-whisper-20240930/

openai-whisper-20240930/LICENSE

MIT License

Copyright (c) 2022 OpenAI

Permission is hereby granted, free of charge, to any person obtaining a copy

of this software and associated documentation files (the "Software"), to deal

in the Software without restriction, including without limitation the rights

to use, copy, modify, merge, publish, distribute, sublicense, and/or sell

copies of the Software, and to permit persons to whom the Software is

furnished to do so, subject to the following conditions:

The above copyright notice and this permission notice shall be included in all

copies or substantial portions of the Software.

THE SOFTWARE IS PROVIDED "AS IS", WITHOUT WARRANTY OF ANY KIND, EXPRESS OR

IMPLIED, INCLUDING BUT NOT LIMITED TO THE WARRANTIES OF MERCHANTABILITY,

FITNESS FOR A PARTICULAR PURPOSE AND NONINFRINGEMENT. IN NO EVENT SHALL THE

AUTHORS OR COPYRIGHT HOLDERS BE LIABLE FOR ANY CLAIM, DAMAGES OR OTHER

LIABILITY, WHETHER IN AN ACTION OF CONTRACT, TORT OR OTHERWISE, ARISING FROM,

OUT OF OR IN CONNECTION WITH THE SOFTWARE OR THE USE OR OTHER DEALINGS IN THE

SOFTWARE.

openai-whisper-20240930/MANIFEST.in

include requirements.txt

include README.md

include LICENSE

include whisper/assets/*

include whisper/normalizers/english.json

openai-whisper-20240930/PKG-INFO

Metadata-Version: 2.1

Name: openai-whisper

Version: 20240930

Summary: Robust Speech Recognition via Large-Scale Weak Supervision

Home-page: https://github.com/openai/whisper

Author: OpenAI

License: MIT

Description: # Whisper

[[Blog]](https://openai.com/blog/whisper)

[[Paper]](https://arxiv.org/abs/2212.04356)

[[Model card]](https://github.com/openai/whisper/blob/main/model-card.md)

[[Colab example]](https://colab.research.google.com/github/openai/whisper/blob/master/notebooks/LibriSpeech.ipynb)

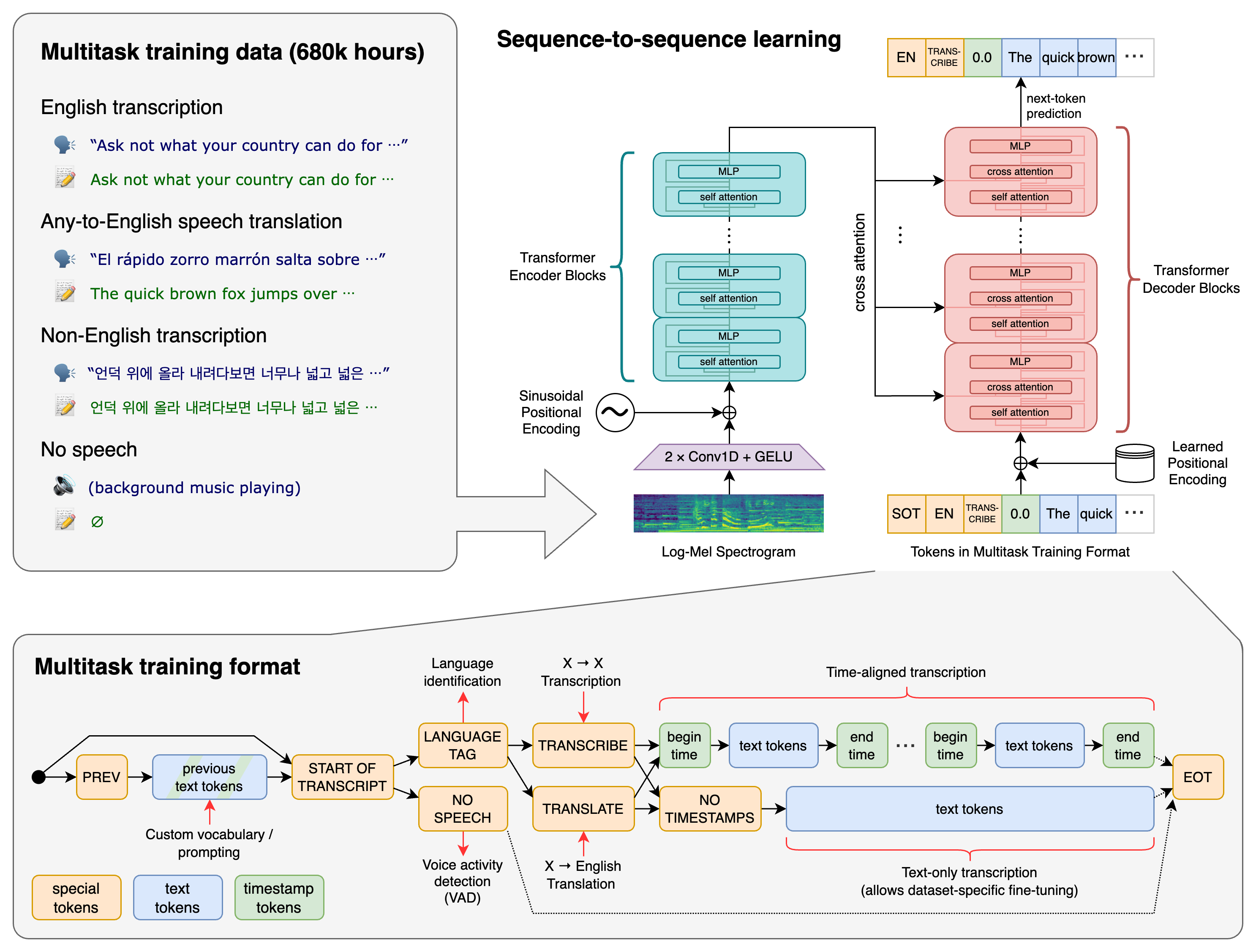

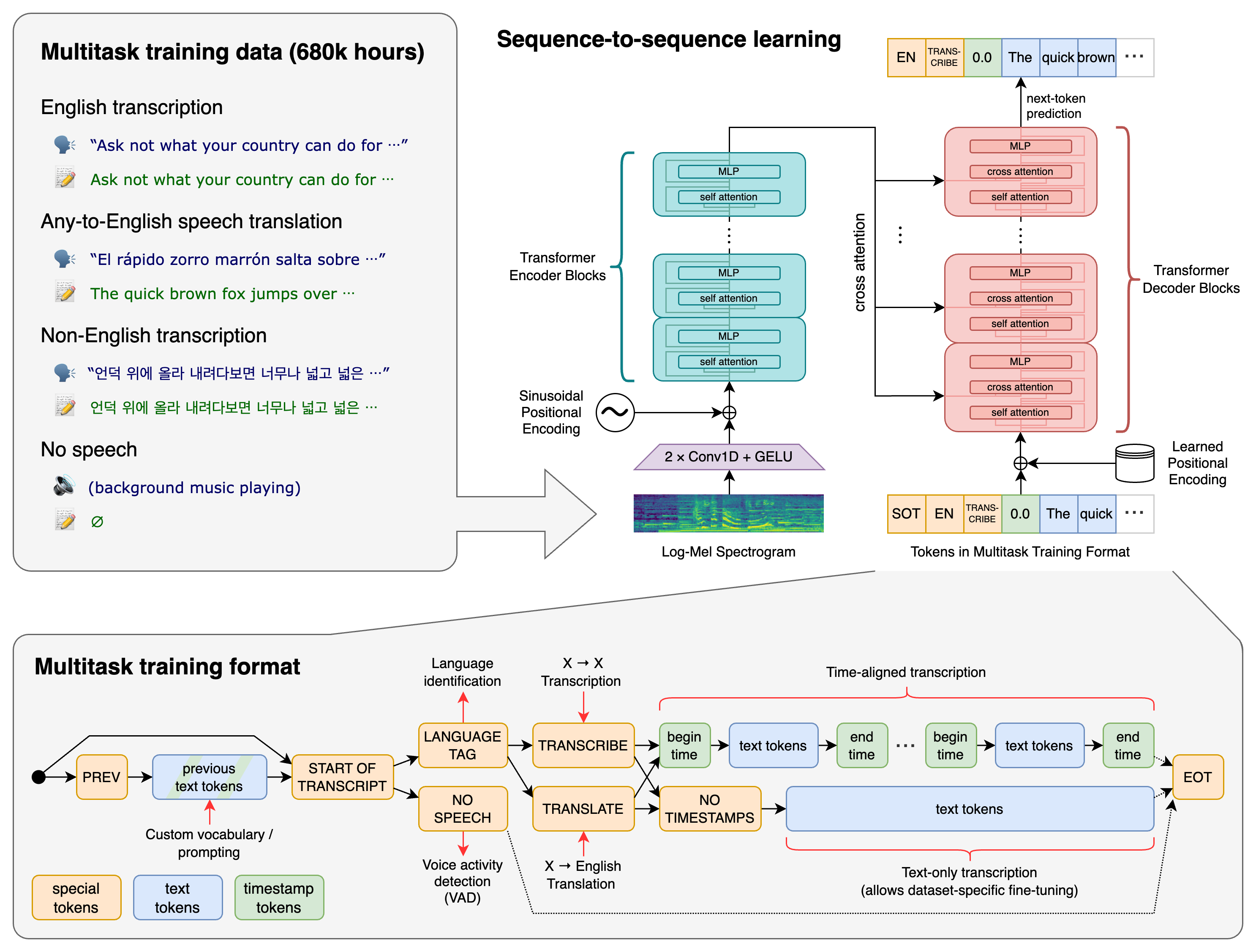

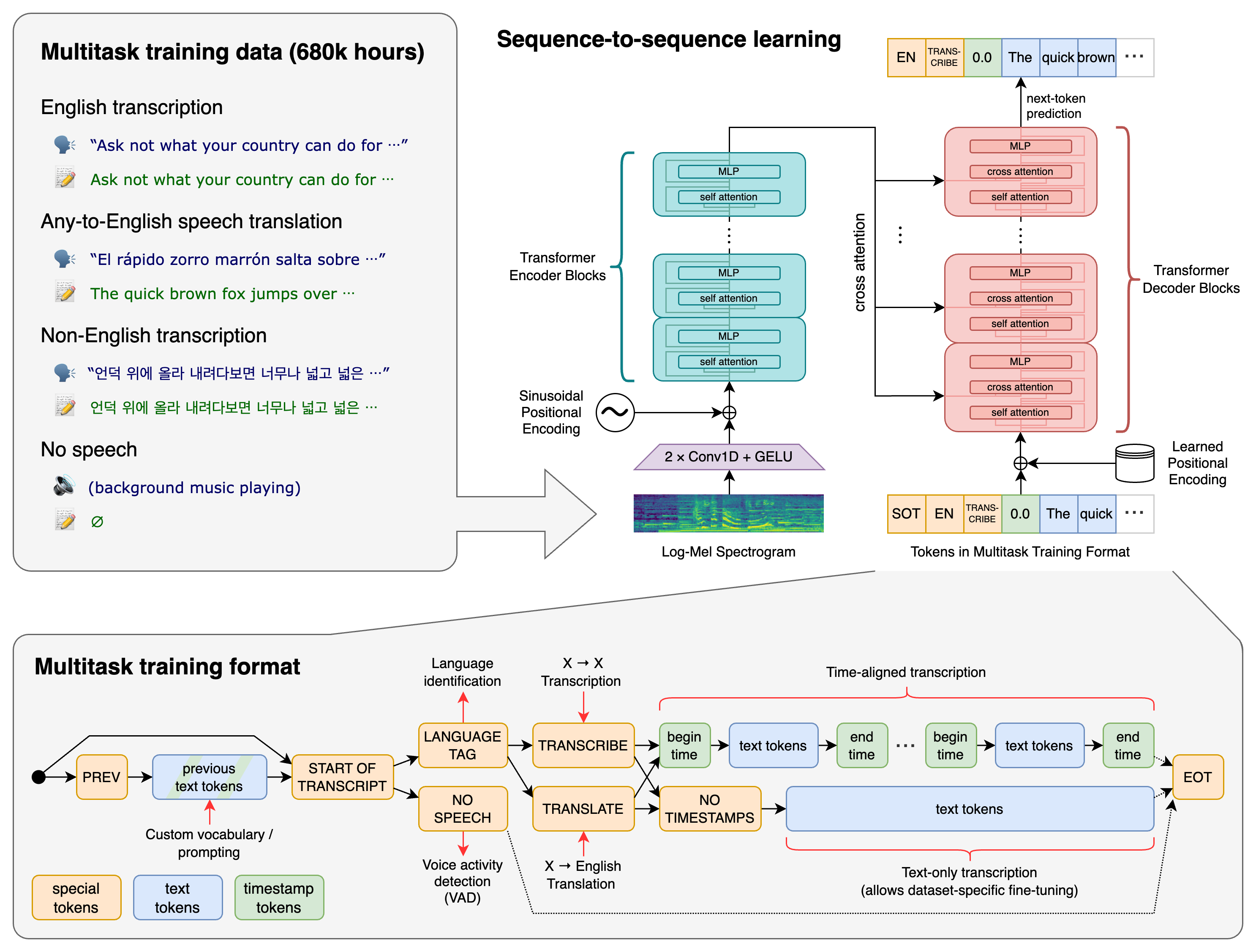

Whisper is a general-purpose speech recognition model. It is trained on a large dataset of diverse audio and is also a multitasking model that can perform multilingual speech recognition, speech translation, and language identification.

## Approach

A Transformer sequence-to-sequence model is trained on various speech processing tasks, including multilingual speech recognition, speech translation, spoken language identification, and voice activity detection. These tasks are jointly represented as a sequence of tokens to be predicted by the decoder, allowing a single model to replace many stages of a traditional speech-processing pipeline. The multitask training format uses a set of special tokens that serve as task specifiers or classification targets.
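To make the token format concrete, here is a minimal sketch of how a task could be expressed as a prefix of special tokens. The helper `build_task_prefix` is hypothetical and not part of the `whisper` package, and the token spellings are assumed to match those defined in `whisper/tokenizer.py`.

```python
from typing import List


def build_task_prefix(
    language: str, task: str = "transcribe", timestamps: bool = False
) -> List[str]:
    """Return the special tokens that specify the task (illustrative only)."""
    if task not in ("transcribe", "translate"):
        raise ValueError(f"unsupported task: {task}")
    # Start marker, then the language tag, then the task specifier.
    prefix = ["<|startoftranscript|>", f"<|{language}|>", f"<|{task}|>"]
    if not timestamps:
        # Ask the decoder to emit text only, without timestamp tokens.
        prefix.append("<|notimestamps|>")
    return prefix


print(build_task_prefix("ja", "translate"))
```

The decoder would then continue this prefix with the predicted text tokens, so one model can serve several tasks by changing only the prefix.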

## Setup

We used Python 3.9.9 and [PyTorch](https://pytorch.org/) 1.10.1 to train and test our models, but the codebase is expected to be compatible with Python 3.8-3.11 and recent PyTorch versions. The codebase also depends on a few Python packages, most notably [OpenAI's tiktoken](https://github.com/openai/tiktoken) for their fast tokenizer implementation. You can download and install (or update to) the latest release of Whisper with the following command:

pip install -U openai-whisper

Alternatively, the following command will pull and install the latest commit from this repository, along with its Python dependencies:

pip install git+https://github.com/openai/whisper.git

To update the package to the latest version of this repository, please run:

pip install --upgrade --no-deps --force-reinstall git+https://github.com/openai/whisper.git

It also requires the command-line tool [`ffmpeg`](https://ffmpeg.org/) to be installed on your system, which is available from most package managers:

```bash

# on Ubuntu or Debian

sudo apt update && sudo apt install ffmpeg

# on Arch Linux

sudo pacman -S ffmpeg

# on MacOS using Homebrew (https://brew.sh/)

brew install ffmpeg

# on Windows using Chocolatey (https://chocolatey.org/)

choco install ffmpeg

# on Windows using Scoop (https://scoop.sh/)

scoop install ffmpeg

```
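Before loading any audio, it can help to confirm that `ffmpeg` is actually reachable. The following is a small sketch using only the Python standard library; the helper name `ffmpeg_available` is an illustration, not something Whisper provides.

```python
import shutil


def ffmpeg_available() -> bool:
    """Return True if an `ffmpeg` executable can be found on PATH."""
    return shutil.which("ffmpeg") is not None


if not ffmpeg_available():
    print("ffmpeg not found; install it with one of the commands above")
```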

You may need [`rust`](http://rust-lang.org) installed as well, in case [tiktoken](https://github.com/openai/tiktoken) does not provide a pre-built wheel for your platform. If you see installation errors during the `pip install` command above, please follow the [Getting started page](https://www.rust-lang.org/learn/get-started) to install a Rust development environment. Additionally, you may need to configure the `PATH` environment variable, e.g. `export PATH="$HOME/.cargo/bin:$PATH"`. If the installation fails with `No module named 'setuptools_rust'`, you need to install `setuptools_rust`, e.g. by running:

```bash

pip install setuptools-rust

```

## Available models and languages

There are six model sizes, four with English-only versions, offering speed and accuracy tradeoffs.

Below are the names of the available models and their approximate memory requirements and inference speed relative to the large model.

The relative speeds below are measured by transcribing English speech on an A100, and the real-world speed may vary significantly depending on many factors including the language, the speaking speed, and the available hardware.

| Size | Parameters | English-only model | Multilingual model | Required VRAM | Relative speed |

|:------:|:----------:|:------------------:|:------------------:|:-------------:|:--------------:|

| tiny | 39 M | `tiny.en` | `tiny` | ~1 GB | ~10x |

| base | 74 M | `base.en` | `base` | ~1 GB | ~7x |

| small | 244 M | `small.en` | `small` | ~2 GB | ~4x |

| medium | 769 M | `medium.en` | `medium` | ~5 GB | ~2x |

| large | 1550 M | N/A | `large` | ~10 GB | 1x |

| turbo | 809 M | N/A | `turbo` | ~6 GB | ~8x |

The `.en` models for English-only applications tend to perform better, especially for the `tiny.en` and `base.en` models. We observed that the difference becomes less significant for the `small.en` and `medium.en` models.

Additionally, the `turbo` model is an optimized version of `large-v3` that offers faster transcription speed with a minimal degradation in accuracy.
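As a rough illustration of reading the table, the sketch below picks the largest model whose approximate VRAM requirement fits a given budget. The `pick_model` helper is hypothetical, the numbers are copied from the table above, and parameter count is used only as a crude proxy for accuracy.

```python
from typing import Optional

# (name, parameters in millions, approx. VRAM in GB, has an English-only variant)
MODELS = [
    ("tiny", 39, 1, True),
    ("base", 74, 1, True),
    ("small", 244, 2, True),
    ("medium", 769, 5, True),
    ("turbo", 809, 6, False),
    ("large", 1550, 10, False),
]


def pick_model(vram_gb: float, english_only: bool = False) -> Optional[str]:
    """Return the largest model that fits in `vram_gb`, or None if none fits."""
    candidates = [
        m for m in MODELS
        if m[2] <= vram_gb and (m[3] or not english_only)
    ]
    if not candidates:
        return None
    name = max(candidates, key=lambda m: m[1])[0]
    return f"{name}.en" if english_only else name


print(pick_model(6))                     # multilingual choice for a 6 GB budget
print(pick_model(6, english_only=True))  # English-only choice for the same budget
```

The VRAM figures are approximate, so a real deployment would likely leave headroom rather than match the budget exactly.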

Whisper's performance varies widely depending on the language. The figure below shows a performance breakdown of `large-v3` and `large-v2` models by language, using WERs (word error rates) or CER (character error rates, shown in *Italic*) evaluated on the Common Voice 15 and Fleurs datasets. Additional WER/CER metrics corresponding to the other models and datasets can be found in Appendix D.1, D.2, and D.4 of [the paper](https://arxiv.org/abs/2212.04356), as well as the BLEU (Bilingual Evaluation Understudy) scores for translation in Appendix D.3.
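For readers unfamiliar with these metrics, here is a minimal sketch of how WER and CER are commonly computed: the edit distance between reference and hypothesis, divided by the reference length. It omits the text normalization that a full evaluation would normally apply first, and the function names are illustrative rather than part of Whisper.

```python
from typing import List, Sequence


def edit_distance(ref: Sequence[str], hyp: Sequence[str]) -> int:
    """Levenshtein distance between two token sequences."""
    prev = list(range(len(hyp) + 1))
    for i, r in enumerate(ref, start=1):
        curr: List[int] = [i]
        for j, h in enumerate(hyp, start=1):
            cost = 0 if r == h else 1
            curr.append(
                min(
                    prev[j] + 1,         # deletion
                    curr[j - 1] + 1,     # insertion
                    prev[j - 1] + cost,  # substitution or match
                )
            )
        prev = curr
    return prev[-1]


def wer(reference: str, hypothesis: str) -> float:
    """Word error rate: word-level edits divided by reference word count."""
    ref_words = reference.split()
    if not ref_words:
        raise ValueError("reference must contain at least one word")
    return edit_distance(ref_words, hypothesis.split()) / len(ref_words)


def cer(reference: str, hypothesis: str) -> float:
    """Character error rate: character-level edits divided by reference length."""
    if not reference:
        raise ValueError("reference must not be empty")
    return edit_distance(list(reference), list(hypothesis)) / len(reference)


print(wer("the cat sat", "the hat sat"))
```

CER is typically preferred for languages that are not written with spaces between words, which is why the two metrics appear side by side.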

## Command-line usage

The following command will transcribe speech in audio files, using the `turbo` model:

whisper audio.flac audio.mp3 audio.wav --model turbo

The default setting (which selects the `small` model) works well for transcribing English. To transcribe an audio file containing non-English speech, you can specify the language using the `--language` option:

whisper japanese.wav --language Japanese

Adding `--task translate` will translate the speech into English:

whisper japanese.wav --language Japanese --task translate

Run the following to view all available options:

whisper --help

See [tokenizer.py](https://github.com/openai/whisper/blob/main/whisper/tokenizer.py) for the list of all available languages.
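When driving the command-line tool from a script, the invocations above can be assembled programmatically. This sketch only builds the argument list from the `--model`, `--language`, and `--task` options shown above; `build_whisper_command` is a hypothetical helper, and actually running the command assumes `whisper` is installed and on `PATH`.

```python
import shlex
from typing import List, Optional, Sequence


def build_whisper_command(
    files: Sequence[str],
    model: str = "turbo",
    language: Optional[str] = None,
    task: Optional[str] = None,
) -> List[str]:
    """Build an argument list for the `whisper` CLI (does not run it)."""
    if not files:
        raise ValueError("at least one audio file is required")
    cmd = ["whisper", *files, "--model", model]
    if language:
        cmd += ["--language", language]
    if task:
        cmd += ["--task", task]
    return cmd


cmd = build_whisper_command(["japanese.wav"], language="Japanese", task="translate")
print(shlex.join(cmd))
# To execute it: subprocess.run(cmd, check=True)
```

Passing a list rather than a single string avoids shell quoting issues with file names that contain spaces.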

## Python usage

Transcription can also be performed within Python:

```python

import whisper

model = whisper.load_model("turbo")

result = model.transcribe("audio.mp3")

print(result["text"])

```

Internally, the `transcribe()` method reads the entire file and processes the audio with a sliding 30-second window, performing autoregressive sequence-to-sequence predictions on each window.
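The windowing idea can be sketched as follows. This is a simplification with a fixed 30-second stride and an assumed 16 kHz sample rate; the real `transcribe()` may advance each window differently (for example, based on decoded timestamps), so treat `fixed_windows` as a hypothetical illustration only.

```python
from typing import Iterator, Tuple

SAMPLE_RATE = 16_000   # Hz; assumed sample rate of the loaded audio
WINDOW_SECONDS = 30    # window length described above


def fixed_windows(n_samples: int) -> Iterator[Tuple[int, int]]:
    """Yield (start, end) sample indices of consecutive 30-second windows."""
    window = SAMPLE_RATE * WINDOW_SECONDS
    for start in range(0, n_samples, window):
        # The final window may be shorter than 30 seconds.
        yield start, min(start + window, n_samples)


for start, end in fixed_windows(65 * SAMPLE_RATE):
    print(f"{start / SAMPLE_RATE:.0f}s - {end / SAMPLE_RATE:.0f}s")
```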

Below is an example usage of `whisper.detect_language()` and `whisper.decode()` which provide lower-level access to the model.

```python

import whisper

model = whisper.load_model("turbo")

# load audio and pad/trim it to fit 30 seconds

audio = whisper.load_audio("audio.mp3")

audio = whisper.pad_or_trim(audio)

# make log-Mel spectrogram and move to the same device as the model

mel = whisper.log_mel_spectrogram(audio).to(model.device)

# detect the spoken language

_, probs = model.detect_language(mel)

print(f"Detected language: {max(probs, key=probs.get)}")

# decode the audio

options = whisper.DecodingOptions()

result = whisper.decode(model, mel, options)

# print the recognized text

print(result.text)

```
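The example above keeps only the single most likely language. If several candidates are of interest, a small helper can rank them. The sketch assumes `probs` is a dictionary mapping language codes to probabilities, as the `max(probs, key=probs.get)` call suggests; `top_languages` is hypothetical and the numbers below are made up for illustration.

```python
from typing import Dict, List, Tuple


def top_languages(probs: Dict[str, float], k: int = 3) -> List[Tuple[str, float]]:
    """Return the k most probable (language, probability) pairs, best first."""
    return sorted(probs.items(), key=lambda item: item[1], reverse=True)[:k]


# Made-up probabilities for illustration only, not real model output.
example_probs = {"en": 0.05, "ja": 0.90, "ko": 0.03, "zh": 0.02}
for lang, p in top_languages(example_probs):
    print(f"{lang}: {p:.2f}")
```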

## More examples

Please use the [Show and tell](https://github.com/openai/whisper/discussions/categories/show-and-tell) category in Discussions for sharing more example usages of Whisper and third-party extensions such as web demos, integrations with other tools, ports for different platforms, etc.

## License

Whisper's code and model weights are released under the MIT License. See [LICENSE](https://github.com/openai/whisper/blob/main/LICENSE) for further details.

Platform: UNKNOWN

Requires-Python: >=3.8

Description-Content-Type: text/markdown

Provides-Extra: dev

openai-whisper-20240930/README.md

# Whisper

[[Blog]](https://openai.com/blog/whisper)

[[Paper]](https://arxiv.org/abs/2212.04356)

[[Model card]](https://github.com/openai/whisper/blob/main/model-card.md)

[[Colab example]](https://colab.research.google.com/github/openai/whisper/blob/master/notebooks/LibriSpeech.ipynb)

Whisper is a general-purpose speech recognition model. It is trained on a large dataset of diverse audio and is also a multitasking model that can perform multilingual speech recognition, speech translation, and language identification.

## Approach

A Transformer sequence-to-sequence model is trained on various speech processing tasks, including multilingual speech recognition, speech translation, spoken language identification, and voice activity detection. These tasks are jointly represented as a sequence of tokens to be predicted by the decoder, allowing a single model to replace many stages of a traditional speech-processing pipeline. The multitask training format uses a set of special tokens that serve as task specifiers or classification targets.

## Setup

We used Python 3.9.9 and [PyTorch](https://pytorch.org/) 1.10.1 to train and test our models, but the codebase is expected to be compatible with Python 3.8-3.11 and recent PyTorch versions. The codebase also depends on a few Python packages, most notably [OpenAI's tiktoken](https://github.com/openai/tiktoken) for their fast tokenizer implementation. You can download and install (or update to) the latest release of Whisper with the following command:

pip install -U openai-whisper

Alternatively, the following command will pull and install the latest commit from this repository, along with its Python dependencies:

pip install git+https://github.com/openai/whisper.git

To update the package to the latest version of this repository, please run:

pip install --upgrade --no-deps --force-reinstall git+https://github.com/openai/whisper.git

It also requires the command-line tool [`ffmpeg`](https://ffmpeg.org/) to be installed on your system, which is available from most package managers:

```bash

# on Ubuntu or Debian

sudo apt update && sudo apt install ffmpeg

# on Arch Linux

sudo pacman -S ffmpeg

# on MacOS using Homebrew (https://brew.sh/)

brew install ffmpeg

# on Windows using Chocolatey (https://chocolatey.org/)

choco install ffmpeg

# on Windows using Scoop (https://scoop.sh/)

scoop install ffmpeg

```

You may need [`rust`](http://rust-lang.org) installed as well, in case [tiktoken](https://github.com/openai/tiktoken) does not provide a pre-built wheel for your platform. If you see installation errors during the `pip install` command above, please follow the [Getting started page](https://www.rust-lang.org/learn/get-started) to install a Rust development environment. Additionally, you may need to configure the `PATH` environment variable, e.g. `export PATH="$HOME/.cargo/bin:$PATH"`. If the installation fails with `No module named 'setuptools_rust'`, you need to install `setuptools_rust`, e.g. by running:

```bash

pip install setuptools-rust

```

## Available models and languages

There are six model sizes, four with English-only versions, offering speed and accuracy tradeoffs.

Below are the names of the available models and their approximate memory requirements and inference speed relative to the large model.

The relative speeds below are measured by transcribing English speech on an A100, and the real-world speed may vary significantly depending on many factors including the language, the speaking speed, and the available hardware.

| Size | Parameters | English-only model | Multilingual model | Required VRAM | Relative speed |

|:------:|:----------:|:------------------:|:------------------:|:-------------:|:--------------:|

| tiny | 39 M | `tiny.en` | `tiny` | ~1 GB | ~10x |

| base | 74 M | `base.en` | `base` | ~1 GB | ~7x |

| small | 244 M | `small.en` | `small` | ~2 GB | ~4x |

| medium | 769 M | `medium.en` | `medium` | ~5 GB | ~2x |

| large | 1550 M | N/A | `large` | ~10 GB | 1x |

| turbo | 809 M | N/A | `turbo` | ~6 GB | ~8x |

The `.en` models for English-only applications tend to perform better, especially for the `tiny.en` and `base.en` models. We observed that the difference becomes less significant for the `small.en` and `medium.en` models.

Additionally, the `turbo` model is an optimized version of `large-v3` that offers faster transcription speed with a minimal degradation in accuracy.

Whisper's performance varies widely depending on the language. The figure below shows a performance breakdown of `large-v3` and `large-v2` models by language, using WERs (word error rates) or CER (character error rates, shown in *Italic*) evaluated on the Common Voice 15 and Fleurs datasets. Additional WER/CER metrics corresponding to the other models and datasets can be found in Appendix D.1, D.2, and D.4 of [the paper](https://arxiv.org/abs/2212.04356), as well as the BLEU (Bilingual Evaluation Understudy) scores for translation in Appendix D.3.

## Command-line usage

The following command will transcribe speech in audio files, using the `turbo` model:

whisper audio.flac audio.mp3 audio.wav --model turbo

The default setting (which selects the `small` model) works well for transcribing English. To transcribe an audio file containing non-English speech, you can specify the language using the `--language` option:

whisper japanese.wav --language Japanese

Adding `--task translate` will translate the speech into English:

whisper japanese.wav --language Japanese --task translate

Run the following to view all available options:

whisper --help

See [tokenizer.py](https://github.com/openai/whisper/blob/main/whisper/tokenizer.py) for the list of all available languages.

## Python usage

Transcription can also be performed within Python:

```python

import whisper

model = whisper.load_model("turbo")

result = model.transcribe("audio.mp3")

print(result["text"])

```

Internally, the `transcribe()` method reads the entire file and processes the audio with a sliding 30-second window, performing autoregressive sequence-to-sequence predictions on each window.

Below is an example usage of `whisper.detect_language()` and `whisper.decode()` which provide lower-level access to the model.

```python

import whisper

model = whisper.load_model("turbo")

# load audio and pad/trim it to fit 30 seconds

audio = whisper.load_audio("audio.mp3")

audio = whisper.pad_or_trim(audio)

# make log-Mel spectrogram and move to the same device as the model

mel = whisper.log_mel_spectrogram(audio).to(model.device)

# detect the spoken language

_, probs = model.detect_language(mel)

print(f"Detected language: {max(probs, key=probs.get)}")

# decode the audio

options = whisper.DecodingOptions()

result = whisper.decode(model, mel, options)

# print the recognized text

print(result.text)

```

## More examples

Please use the [Show and tell](https://github.com/openai/whisper/discussions/categories/show-and-tell) category in Discussions for sharing more example usages of Whisper and third-party extensions such as web demos, integrations with other tools, ports for different platforms, etc.

## License

Whisper's code and model weights are released under the MIT License. See [LICENSE](https://github.com/openai/whisper/blob/main/LICENSE) for further details.

openai-whisper-20240930/openai_whisper.egg-info/

openai-whisper-20240930/openai_whisper.egg-info/PKG-INFO

Metadata-Version: 2.1

Name: openai-whisper

Version: 20240930

Summary: Robust Speech Recognition via Large-Scale Weak Supervision

Home-page: https://github.com/openai/whisper

Author: OpenAI

License: MIT

Description: # Whisper

[[Blog]](https://openai.com/blog/whisper)

[[Paper]](https://arxiv.org/abs/2212.04356)

[[Model card]](https://github.com/openai/whisper/blob/main/model-card.md)

[[Colab example]](https://colab.research.google.com/github/openai/whisper/blob/master/notebooks/LibriSpeech.ipynb)

Whisper is a general-purpose speech recognition model. It is trained on a large dataset of diverse audio and is also a multitasking model that can perform multilingual speech recognition, speech translation, and language identification.

## Approach

A Transformer sequence-to-sequence model is trained on various speech processing tasks, including multilingual speech recognition, speech translation, spoken language identification, and voice activity detection. These tasks are jointly represented as a sequence of tokens to be predicted by the decoder, allowing a single model to replace many stages of a traditional speech-processing pipeline. The multitask training format uses a set of special tokens that serve as task specifiers or classification targets.

## Setup

We used Python 3.9.9 and [PyTorch](https://pytorch.org/) 1.10.1 to train and test our models, but the codebase is expected to be compatible with Python 3.8-3.11 and recent PyTorch versions. The codebase also depends on a few Python packages, most notably [OpenAI's tiktoken](https://github.com/openai/tiktoken) for their fast tokenizer implementation. You can download and install (or update to) the latest release of Whisper with the following command:

pip install -U openai-whisper

Alternatively, the following command will pull and install the latest commit from this repository, along with its Python dependencies:

pip install git+https://github.com/openai/whisper.git

To update the package to the latest version of this repository, please run:

pip install --upgrade --no-deps --force-reinstall git+https://github.com/openai/whisper.git

It also requires the command-line tool [`ffmpeg`](https://ffmpeg.org/) to be installed on your system, which is available from most package managers:

```bash

# on Ubuntu or Debian

sudo apt update && sudo apt install ffmpeg

# on Arch Linux

sudo pacman -S ffmpeg

# on MacOS using Homebrew (https://brew.sh/)

brew install ffmpeg

# on Windows using Chocolatey (https://chocolatey.org/)

choco install ffmpeg

# on Windows using Scoop (https://scoop.sh/)

scoop install ffmpeg

```

You may need [`rust`](http://rust-lang.org) installed as well, in case [tiktoken](https://github.com/openai/tiktoken) does not provide a pre-built wheel for your platform. If you see installation errors during the `pip install` command above, please follow the [Getting started page](https://www.rust-lang.org/learn/get-started) to install a Rust development environment. Additionally, you may need to configure the `PATH` environment variable, e.g. `export PATH="$HOME/.cargo/bin:$PATH"`. If the installation fails with `No module named 'setuptools_rust'`, you need to install `setuptools_rust`, e.g. by running:

```bash

pip install setuptools-rust

```

## Available models and languages

There are six model sizes, four with English-only versions, offering speed and accuracy tradeoffs.

Below are the names of the available models and their approximate memory requirements and inference speed relative to the large model.

The relative speeds below are measured by transcribing English speech on an A100, and the real-world speed may vary significantly depending on many factors including the language, the speaking speed, and the available hardware.

| Size | Parameters | English-only model | Multilingual model | Required VRAM | Relative speed |

|:------:|:----------:|:------------------:|:------------------:|:-------------:|:--------------:|

| tiny | 39 M | `tiny.en` | `tiny` | ~1 GB | ~10x |

| base | 74 M | `base.en` | `base` | ~1 GB | ~7x |

| small | 244 M | `small.en` | `small` | ~2 GB | ~4x |

| medium | 769 M | `medium.en` | `medium` | ~5 GB | ~2x |

| large | 1550 M | N/A | `large` | ~10 GB | 1x |

| turbo | 809 M | N/A | `turbo` | ~6 GB | ~8x |

The `.en` models for English-only applications tend to perform better, especially for the `tiny.en` and `base.en` models. We observed that the difference becomes less significant for the `small.en` and `medium.en` models.

Additionally, the `turbo` model is an optimized version of `large-v3` that offers faster transcription speed with a minimal degradation in accuracy.

Whisper's performance varies widely depending on the language. The figure below shows a performance breakdown of `large-v3` and `large-v2` models by language, using WERs (word error rates) or CER (character error rates, shown in *Italic*) evaluated on the Common Voice 15 and Fleurs datasets. Additional WER/CER metrics corresponding to the other models and datasets can be found in Appendix D.1, D.2, and D.4 of [the paper](https://arxiv.org/abs/2212.04356), as well as the BLEU (Bilingual Evaluation Understudy) scores for translation in Appendix D.3.

## Command-line usage

The following command will transcribe speech in audio files, using the `turbo` model:

whisper audio.flac audio.mp3 audio.wav --model turbo

The default setting (which selects the `small` model) works well for transcribing English. To transcribe an audio file containing non-English speech, you can specify the language using the `--language` option:

whisper japanese.wav --language Japanese

Adding `--task translate` will translate the speech into English:

whisper japanese.wav --language Japanese --task translate

Run the following to view all available options:

whisper --help

See [tokenizer.py](https://github.com/openai/whisper/blob/main/whisper/tokenizer.py) for the list of all available languages.

## Python usage

Transcription can also be performed within Python:

```python

import whisper

model = whisper.load_model("turbo")

result = model.transcribe("audio.mp3")

print(result["text"])

```

Internally, the `transcribe()` method reads the entire file and processes the audio with a sliding 30-second window, performing autoregressive sequence-to-sequence predictions on each window.

Below is an example usage of `whisper.detect_language()` and `whisper.decode()` which provide lower-level access to the model.

```python

import whisper

model = whisper.load_model("turbo")

# load audio and pad/trim it to fit 30 seconds

audio = whisper.load_audio("audio.mp3")

audio = whisper.pad_or_trim(audio)

# make log-Mel spectrogram and move to the same device as the model

mel = whisper.log_mel_spectrogram(audio).to(model.device)

# detect the spoken language

_, probs = model.detect_language(mel)

print(f"Detected language: {max(probs, key=probs.get)}")

# decode the audio

options = whisper.DecodingOptions()

result = whisper.decode(model, mel, options)

# print the recognized text

print(result.text)

```

## More examples

Please use the [Show and tell](https://github.com/openai/whisper/discussions/categories/show-and-tell) category in Discussions for sharing more example usages of Whisper and third-party extensions such as web demos, integrations with other tools, ports for different platforms, etc.

## License

Whisper's code and model weights are released under the MIT License. See [LICENSE](https://github.com/openai/whisper/blob/main/LICENSE) for further details.

Platform: UNKNOWN

Requires-Python: >=3.8

Description-Content-Type: text/markdown

Provides-Extra: dev

openai-whisper-20240930/openai_whisper.egg-info/SOURCES.txt

LICENSE

MANIFEST.in

README.md

pyproject.toml

requirements.txt

setup.py

openai_whisper.egg-info/PKG-INFO

openai_whisper.egg-info/SOURCES.txt

openai_whisper.egg-info/dependency_links.txt

openai_whisper.egg-info/entry_points.txt

openai_whisper.egg-info/requires.txt

openai_whisper.egg-info/top_level.txt

whisper/__init__.py

whisper/__main__.py

whisper/audio.py

whisper/decoding.py

whisper/model.py

whisper/timing.py

whisper/tokenizer.py

whisper/transcribe.py

whisper/triton_ops.py

whisper/utils.py

whisper/version.py

whisper/assets/gpt2.tiktoken

whisper/assets/mel_filters.npz

whisper/assets/multilingual.tiktoken

whisper/normalizers/__init__.py

whisper/normalizers/basic.py

whisper/normalizers/english.json

whisper/normalizers/english.py

openai-whisper-20240930/openai_whisper.egg-info/dependency_links.txt

openai-whisper-20240930/openai_whisper.egg-info/entry_points.txt

[console_scripts]

whisper = whisper.transcribe:cli

openai-whisper-20240930/openai_whisper.egg-info/requires.txt

more-itertools

tiktoken

[:platform_machine == "x86_64" and sys_platform == "linux" or sys_platform == "linux2"]

triton>=2.0.0

[dev]

pytest

flake8

openai-whisper-20240930/openai_whisper.egg-info/top_level.txt

whisper

openai-whisper-20240930/pyproject.toml

[tool.black]

[tool.isort]

profile = "black"

include_trailing_comma = true

line_length = 88

multi_line_output = 3

openai-whisper-20240930/requirements.txt

more-itertools

tiktoken

triton>=2.0.0;platform_machine=="x86_64" and sys_platform=="linux" or sys_platform=="linux2"

openai-whisper-20240930/setup.cfg

[egg_info]

tag_build =

tag_date = 0

openai-whisper-20240930/setup.py

import platform

import sys

from pathlib import Path

import pkg_resources

from setuptools import find_packages, setup

def read_version(fname="whisper/version.py"):
    # Execute version.py in this function's scope and read back __version__.
    exec(compile(open(fname, encoding="utf-8").read(), fname, "exec"))
    return locals()["__version__"]


requirements = []
if sys.platform.startswith("linux") and platform.machine() == "x86_64":
    requirements.append("triton>=2.0.0")

setup(
    name="openai-whisper",
    py_modules=["whisper"],
    version=read_version(),
    description="Robust Speech Recognition via Large-Scale Weak Supervision",
    long_description=open("README.md", encoding="utf-8").read(),
    long_description_content_type="text/markdown",
    readme="README.md",
    python_requires=">=3.8",
    author="OpenAI",
    url="https://github.com/openai/whisper",
    license="MIT",
    packages=find_packages(exclude=["tests*"]),
    # Install requirements are read from requirements.txt next to this file.
    install_requires=[
        str(r)
        for r in pkg_resources.parse_requirements(
            Path(__file__).with_name("requirements.txt").open()
        )
    ],
    entry_points={
        "console_scripts": ["whisper=whisper.transcribe:cli"],
    },
    include_package_data=True,
    extras_require={"dev": ["pytest", "scipy", "black", "flake8", "isort"]},
)

openai-whisper-20240930/whisper/

openai-whisper-20240930/whisper/__init__.py

import hashlib

import io

import os

import urllib

import warnings

from typing import List, Optional, Union

import torch

from tqdm import tqdm

from .audio import load_audio, log_mel_spectrogram, pad_or_trim

from .decoding import DecodingOptions, DecodingResult, decode, detect_language

from .model import ModelDimensions, Whisper

from .transcribe import transcribe

from .version import __version__

_MODELS = {

"tiny.en": "https://openaipublic.azureedge.net/main/whisper/models/d3dd57d32accea0b295c96e26691aa14d8822fac7d9d27d5dc00b4ca2826dd03/tiny.en.pt",

"tiny": "https://openaipublic.azureedge.net/main/whisper/models/65147644a518d12f04e32d6f3b26facc3f8dd46e5390956a9424a650c0ce22b9/tiny.pt",

"base.en": "https://openaipublic.azureedge.net/main/whisper/models/25a8566e1d0c1e2231d1c762132cd20e0f96a85d16145c3a00adf5d1ac670ead/base.en.pt",

"base": "https://openaipublic.azureedge.net/main/whisper/models/ed3a0b6b1c0edf879ad9b11b1af5a0e6ab5db9205f891f668f8b0e6c6326e34e/base.pt",

"small.en": "https://openaipublic.azureedge.net/main/whisper/models/f953ad0fd29cacd07d5a9eda5624af0f6bcf2258be67c92b79389873d91e0872/small.en.pt",

"small": "https://openaipublic.azureedge.net/main/whisper/models/9ecf779972d90ba49c06d968637d720dd632c55bbf19d441fb42bf17a411e794/small.pt",

"medium.en": "https://openaipublic.azureedge.net/main/whisper/models/d7440d1dc186f76616474e0ff0b3b6b879abc9d1a4926b7adfa41db2d497ab4f/medium.en.pt",

"medium": "https://openaipublic.azureedge.net/main/whisper/models/345ae4da62f9b3d59415adc60127b97c714f32e89e936602e85993674d08dcb1/medium.pt",

"large-v1": "https://openaipublic.azureedge.net/main/whisper/models/e4b87e7e0bf463eb8e6956e646f1e277e901512310def2c24bf0e11bd3c28e9a/large-v1.pt",

"large-v2": "https://openaipublic.azureedge.net/main/whisper/models/81f7c96c852ee8fc832187b0132e569d6c3065a3252ed18e56effd0b6a73e524/large-v2.pt",

"large-v3": "https://openaipublic.azureedge.net/main/whisper/models/e5b1a55b89c1367dacf97e3e19bfd829a01529dbfdeefa8caeb59b3f1b81dadb/large-v3.pt",

"large": "https://openaipublic.azureedge.net/main/whisper/models/e5b1a55b89c1367dacf97e3e19bfd829a01529dbfdeefa8caeb59b3f1b81dadb/large-v3.pt",

"large-v3-turbo": "https://openaipublic.azureedge.net/main/whisper/models/aff26ae408abcba5fbf8813c21e62b0941638c5f6eebfb145be0c9839262a19a/large-v3-turbo.pt",

"turbo": "https://openaipublic.azureedge.net/main/whisper/models/aff26ae408abcba5fbf8813c21e62b0941638c5f6eebfb145be0c9839262a19a/large-v3-turbo.pt",

}

# base85-encoded (n_layers, n_heads) boolean arrays indicating the cross-attention heads that are

# highly correlated to the word-level timing, i.e. the alignment between audio and text tokens.

_ALIGNMENT_HEADS = {

"tiny.en": b"ABzY8J1N>@0{>%R00Bk>$p{7v037`oCl~+#00",

"tiny": b"ABzY8bu8Lr0{>%RKn9Fp%m@SkK7Kt=7ytkO",

"base.en": b"ABzY8;40c<0{>%RzzG;p*o+Vo09|#PsxSZm00",

"base": b"ABzY8KQ!870{>%RzyTQH3`Q^yNP!>##QT-<FaQ7m",

"small.en": b"ABzY8>?_)10{>%RpeA61k&I|OI3I$65C{;;pbCHh0B{qLQ;+}v00",

"small": b"ABzY8DmU6=0{>%Rpa?J`kvJ6qF(V^F86#Xh7JUGMK}P<N0000",

"medium.en": b"ABzY8usPae0{>%R7<zz_OvQ{)4kMa0BMw6u5rT}kRKX;$NfYBv00*Hl@qhsU00",

"medium": b"ABzY8B0Jh+0{>%R7}kK1fFL7w6%<-Pf*t^=N)Qr&0RR9",

"large-v1": b"ABzY8r9j$a0{>%R7#4sLmoOs{s)o3~84-RPdcFk!JR<kSfC2yj",

"large-v2": b"ABzY8zd+h!0{>%R7=D0pU<_bnWW*tkYAhobTNnu$jnkEkXqp)j;w1Tzk)UH3X%SZd&fFZ2fC2yj",

"large-v3": b"ABzY8gWO1E0{>%R7(9S+Kn!D~%ngiGaR?*L!iJG9p-nab0JQ=-{D1-g00",

"large": b"ABzY8gWO1E0{>%R7(9S+Kn!D~%ngiGaR?*L!iJG9p-nab0JQ=-{D1-g00",

"large-v3-turbo": b"ABzY8j^C+e0{>%RARaKHP%t(lGR*)0g!tONPyhe`",

"turbo": b"ABzY8j^C+e0{>%RARaKHP%t(lGR*)0g!tONPyhe`",

}
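
# Illustrative sketch (not part of the package): how a (n_layers, n_heads)
# boolean mask could be packed into, and recovered from, a base85 string like
# the ones above. It assumes the payload is one byte per entry and is
# gzip-compressed before base85 encoding; the helper names `encode_head_mask`
# and `decode_head_mask` are hypothetical and only use the standard library.

```python
import base64
import gzip
from typing import List


def encode_head_mask(mask: List[List[bool]]) -> bytes:
    """Pack a (n_layers, n_heads) mask: one byte per entry, gzip, then base85."""
    flat = bytes(int(flag) for row in mask for flag in row)
    return base64.b85encode(gzip.compress(flat))


def decode_head_mask(dump: bytes, n_layers: int, n_heads: int) -> List[List[bool]]:
    """Invert encode_head_mask and reshape the flat bytes into n_layers rows."""
    flat = gzip.decompress(base64.b85decode(dump))
    if len(flat) != n_layers * n_heads:
        raise ValueError(f"expected {n_layers * n_heads} entries, got {len(flat)}")
    return [
        [bool(flat[layer * n_heads + head]) for head in range(n_heads)]
        for layer in range(n_layers)
    ]


# Synthetic 2x3 mask used only for the round trip; it is not real model data.
demo_mask = [[False, True, False], [True, False, False]]
demo_dump = encode_head_mask(demo_mask)
```

# The shape is not stored in the dump, so the caller has to supply the layer
# and head counts; the length check guards against a mismatched shape.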

def _download(url: str, root: str, in_memory: bool) -> Union[bytes, str]:
    os.makedirs(root, exist_ok=True)

    # The second-to-last URL path component is the expected SHA256 digest.
    expected_sha256 = url.split("/")[-2]
    download_target = os.path.join(root, os.path.basename(url))

    if os.path.exists(download_target) and not os.path.isfile(download_target):
        raise RuntimeError(f"{download_target} exists and is not a regular file")

    # Reuse a cached file only if its checksum matches.
    if os.path.isfile(download_target):
        with open(download_target, "rb") as f:
            model_bytes = f.read()
        if hashlib.sha256(model_bytes).hexdigest() == expected_sha256:
            return model_bytes if in_memory else download_target
        else:
            warnings.warn(
                f"{download_target} exists, but the SHA256 checksum does not match; re-downloading the file"
            )

    # Stream the download in 8 KiB chunks while updating the progress bar.
    with urllib.request.urlopen(url) as source, open(download_target, "wb") as output:
        with tqdm(
            total=int(source.info().get("Content-Length")),
            ncols=80,
            unit="iB",
            unit_scale=True,
            unit_divisor=1024,
        ) as loop:
            while True:
                buffer = source.read(8192)
                if not buffer:
                    break

                output.write(buffer)
                loop.update(len(buffer))

    with open(download_target, "rb") as f:
        model_bytes = f.read()
    if hashlib.sha256(model_bytes).hexdigest() != expected_sha256:
        raise RuntimeError(
            "Model has been downloaded but the SHA256 checksum does not match. Please retry loading the model."
        )

    return model_bytes if in_memory else download_target
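
# Illustrative sketch (not part of the package): the checksum convention used
# by `_download`, where the expected SHA256 digest is the second-to-last path
# component of the model URL. The helper names and the `example.invalid` URL
# are hypothetical; nothing is downloaded.

```python
import hashlib


def expected_sha256_from_url(url: str) -> str:
    """Return the digest embedded as the second-to-last URL path component."""
    return url.split("/")[-2]


def checksum_matches(data: bytes, url: str) -> bool:
    """Compare the SHA256 of `data` against the digest embedded in `url`."""
    return hashlib.sha256(data).hexdigest() == expected_sha256_from_url(url)


# Build a URL whose embedded digest matches a small in-memory payload.
payload = b"not a real checkpoint"
digest = hashlib.sha256(payload).hexdigest()
example_url = f"https://example.invalid/models/{digest}/demo.pt"
```

# Embedding the digest in the URL lets the same string identify the file and
# verify it, so no separate checksum table is needed.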

def available_models() -> List[str]:
    """Returns the names of available models"""
    return list(_MODELS.keys())

def load_model(
    name: str,
    device: Optional[Union[str, torch.device]] = None,
    download_root: Optional[str] = None,
    in_memory: bool = False,
) -> Whisper:
    """
    Load a Whisper ASR model

    Parameters
    ----------
    name : str
        one of the official model names listed by `whisper.available_models()`, or
        path to a model checkpoint containing the model dimensions and the model state_dict.
    device : Union[str, torch.device]
        the PyTorch device to put the model into; defaults to "cuda" when available, else "cpu"
    download_root: str
        path to download the model files; by default, it uses "~/.cache/whisper"
        (or "$XDG_CACHE_HOME/whisper" when XDG_CACHE_HOME is set)
    in_memory: bool
        whether to preload the model weights into host memory

    Returns
    -------
    model : Whisper
        The Whisper ASR model instance
    """

    if device is None:
        device = "cuda" if torch.cuda.is_available() else "cpu"
    if download_root is None:
        default = os.path.join(os.path.expanduser("~"), ".cache")
        download_root = os.path.join(os.getenv("XDG_CACHE_HOME", default), "whisper")

    if name in _MODELS:
        checkpoint_file = _download(_MODELS[name], download_root, in_memory)
        alignment_heads = _ALIGNMENT_HEADS[name]
    elif os.path.isfile(name):
        if in_memory:
            with open(name, "rb") as f:
                checkpoint_file = f.read()
        else:
            checkpoint_file = name
        alignment_heads = None
    else:
        raise RuntimeError(
            f"Model {name} not found; available models = {available_models()}"
        )

    with (
        io.BytesIO(checkpoint_file) if in_memory else open(checkpoint_file, "rb")
    ) as fp:
        checkpoint = torch.load(fp, map_location=device)
    del checkpoint_file

    dims = ModelDimensions(**checkpoint["dims"])
    model = Whisper(dims)
    model.load_state_dict(checkpoint["model_state_dict"])

    if alignment_heads is not None:
        model.set_alignment_heads(alignment_heads)

    return model.to(device)
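
# Illustrative sketch (not part of the package): the cache-directory lookup
# that `load_model` performs when `download_root` is None. The function name
# `resolve_download_root` and its `env` parameter are hypothetical; `env` is
# only there so the lookup can be exercised without touching os.environ.

```python
import os
from typing import Mapping, Optional


def resolve_download_root(
    download_root: Optional[str] = None,
    env: Optional[Mapping[str, str]] = None,
) -> str:
    """Return an explicit root unchanged, else <XDG_CACHE_HOME or ~/.cache>/whisper."""
    if download_root is not None:
        return download_root
    env = os.environ if env is None else env
    default = os.path.join(os.path.expanduser("~"), ".cache")
    return os.path.join(env.get("XDG_CACHE_HOME", default), "whisper")


# With XDG_CACHE_HOME set, the cache lives under that directory.
xdg_root = resolve_download_root(env={"XDG_CACHE_HOME": "/tmp/cache"})
# Without it, the lookup falls back to ~/.cache/whisper.
home_root = resolve_download_root(env={})
```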

././@PaxHeader

0000000

0000000

0000000

00000000026

00000000000

011453

0000000

0000000

22 mtime=1727720469.0

openai-whisper-20240930/whisper/__main__.py

0000644

0001751

0000177

00000000043

00000000000

021267

00runner

docker

0000000

0000000

from .transcribe import cli

cli()

././@PaxHeader

0000000

0000000

0000000

00000000034

00000000000

011452

0000000

0000000

28 mtime=1727720481.7637334

openai-whisper-20240930/whisper/assets/

0000755

0001751

0000177

00000000000

00000000000

020502

00runner

docker

0000000

0000000

././@PaxHeader

0000000

0000000

0000000

00000000026

00000000000

011453

0000000

0000000

22 mtime=1727720469.0

openai-whisper-20240930/whisper/assets/gpt2.tiktoken

0000644

0001751

0000177

00003137742

00000000000

023151

00runner

docker

0000000

0000000

IQ== 0

Ig== 1

Iw== 2

JA== 3

JQ== 4

Jg== 5

Jw== 6

KA== 7

KQ== 8

Kg== 9

Kw== 10

LA== 11

LQ== 12

Lg== 13

Lw== 14

MA== 15

MQ== 16

Mg== 17

Mw== 18

NA== 19

NQ== 20

Ng== 21

Nw== 22

OA== 23

OQ== 24

Og== 25

Ow== 26

PA== 27

PQ== 28

Pg== 29

Pw== 30

QA== 31

QQ== 32

Qg== 33

Qw== 34

RA== 35

RQ== 36

Rg== 37

Rw== 38

SA== 39

SQ== 40

Sg== 41

Sw== 42

TA== 43

TQ== 44

Tg== 45

Tw== 46

UA== 47

UQ== 48

Ug== 49

Uw== 50

VA== 51

VQ== 52

Vg== 53

Vw== 54

WA== 55

WQ== 56

Wg== 57

Ww== 58

XA== 59

XQ== 60

Xg== 61

Xw== 62

YA== 63

YQ== 64

Yg== 65

Yw== 66

ZA== 67

ZQ== 68

Zg== 69

Zw== 70

aA== 71

aQ== 72

ag== 73

aw== 74

bA== 75

bQ== 76

bg== 77

bw== 78

cA== 79

cQ== 80

cg== 81

cw== 82

dA== 83

dQ== 84

dg== 85

dw== 86

eA== 87

eQ== 88

eg== 89

ew== 90

fA== 91

fQ== 92

fg== 93

oQ== 94

og== 95

ow== 96

pA== 97

pQ== 98

pg== 99

pw== 100

qA== 101

qQ== 102

qg== 103

qw== 104

rA== 105

rg== 106

rw== 107

sA== 108

sQ== 109

sg== 110

sw== 111

tA== 112

tQ== 113

tg== 114

tw== 115

uA== 116

uQ== 117

ug== 118

uw== 119

vA== 120

vQ== 121

vg== 122

vw== 123

wA== 124

wQ== 125

wg== 126

ww== 127

xA== 128

xQ== 129

xg== 130

xw== 131

yA== 132

yQ== 133

yg== 134

yw== 135

zA== 136

zQ== 137

zg== 138

zw== 139

0A== 140

0Q== 141

0g== 142

0w== 143

1A== 144

1Q== 145

1g== 146

1w== 147

2A== 148

2Q== 149

2g== 150

2w== 151

3A== 152

3Q== 153

3g== 154

3w== 155

4A== 156

4Q== 157

4g== 158

4w== 159

5A== 160

5Q== 161

5g== 162

5w== 163

6A== 164

6Q== 165

6g== 166

6w== 167

7A== 168

7Q== 169

7g== 170

7w== 171

8A== 172

8Q== 173

8g== 174

8w== 175

9A== 176

9Q== 177

9g== 178

9w== 179

+A== 180

+Q== 181

+g== 182

+w== 183

/A== 184

/Q== 185

/g== 186

/w== 187

AA== 188

AQ== 189

Ag== 190

Aw== 191

BA== 192

BQ== 193

Bg== 194

Bw== 195

CA== 196

CQ== 197

Cg== 198

Cw== 199

DA== 200

DQ== 201

Dg== 202

Dw== 203

EA== 204

EQ== 205

Eg== 206

Ew== 207

FA== 208

FQ== 209

Fg== 210

Fw== 211

GA== 212

GQ== 213

Gg== 214

Gw== 215

HA== 216

HQ== 217

Hg== 218

Hw== 219

IA== 220

fw== 221

gA== 222

gQ== 223

gg== 224

gw== 225

hA== 226

hQ== 227

hg== 228

hw== 229

iA== 230

iQ== 231

ig== 232

iw== 233

jA== 234

jQ== 235

jg== 236

jw== 237

kA== 238

kQ== 239

kg== 240

kw== 241

lA== 242

lQ== 243

lg== 244

lw== 245

mA== 246

mQ== 247

mg== 248

mw== 249

nA== 250

nQ== 251

ng== 252

nw== 253

oA== 254

rQ== 255

IHQ= 256

IGE= 257

aGU= 258

aW4= 259

cmU= 260

b24= 261

IHRoZQ== 262

ZXI= 263

IHM= 264

YXQ= 265

IHc= 266

IG8= 267

ZW4= 268

IGM= 269

aXQ= 270

aXM= 271

YW4= 272

b3I= 273

ZXM= 274

IGI= 275

ZWQ= 276

IGY= 277

aW5n 278

IHA= 279

b3U= 280

IGFu 281

YWw= 282

YXI= 283

IHRv 284

IG0= 285

IG9m 286

IGlu 287

IGQ= 288

IGg= 289

IGFuZA== 290

aWM= 291

YXM= 292

bGU= 293

IHRo 294

aW9u 295

b20= 296

bGw= 297

ZW50 298

IG4= 299

IGw= 300

c3Q= 301

IHJl 302

dmU= 303

IGU= 304

cm8= 305

bHk= 306

IGJl 307

IGc= 308

IFQ= 309

Y3Q= 310

IFM= 311

aWQ= 312

b3Q= 313

IEk= 314

dXQ= 315

ZXQ= 316

IEE= 317

IGlz 318

IG9u 319

aW0= 320

YW0= 321

b3c= 322

YXk= 323

YWQ= 324

c2U= 325

IHRoYXQ= 326

IEM= 327

aWc= 328

IGZvcg== 329

YWM= 330

IHk= 331

dmVy 332

dXI= 333

IHU= 334

bGQ= 335

IHN0 336

IE0= 337

J3M= 338

IGhl 339

IGl0 340

YXRpb24= 341

aXRo 342

aXI= 343

Y2U= 344

IHlvdQ== 345

aWw= 346

IEI= 347

IHdo 348

b2w= 349

IFA= 350

IHdpdGg= 351

IDE= 352

dGVy 353

Y2g= 354

IGFz 355

IHdl 356

ICg= 357

bmQ= 358

aWxs 359

IEQ= 360

aWY= 361

IDI= 362

YWc= 363

ZXJz 364

a2U= 365

ICI= 366

IEg= 367

ZW0= 368

IGNvbg== 369

IFc= 370

IFI= 371

aGVy 372

IHdhcw== 373

IHI= 374

b2Q= 375

IEY= 376

dWw= 377

YXRl 378

IGF0 379

cmk= 380

cHA= 381

b3Jl 382

IFRoZQ== 383

IHNl 384

dXM= 385

IHBybw== 386

IGhh 387

dW0= 388

IGFyZQ== 389

IGRl 390

YWlu 391

YW5k 392

IG9y 393

aWdo 394

ZXN0 395

aXN0 396

YWI= 397

cm9t 398

IE4= 399

dGg= 400

IGNvbQ== 401

IEc= 402

dW4= 403

b3A= 404

MDA= 405

IEw= 406

IG5vdA== 407

ZXNz 408

IGV4 409

IHY= 410

cmVz 411

IEU= 412

ZXc= 413

aXR5 414

YW50 415

IGJ5 416

ZWw= 417

b3M= 418

b3J0 419

b2M= 420

cXU= 421

IGZyb20= 422

IGhhdmU= 423

IHN1 424

aXZl 425

b3VsZA== 426

IHNo 427

IHRoaXM= 428

bnQ= 429

cmE= 430

cGU= 431

aWdodA== 432

YXJ0 433

bWVudA== 434

IGFs 435

dXN0 436

ZW5k 437

LS0= 438

YWxs 439

IE8= 440

YWNr 441

IGNo 442

IGxl 443

aWVz 444

cmVk 445

YXJk 446

4oA= 447

b3V0 448

IEo= 449

IGFi 450

ZWFy 451

aXY= 452

YWxseQ== 453

b3Vy 454

b3N0 455

Z2g= 456

cHQ= 457

IHBs 458

YXN0 459

IGNhbg== 460

YWs= 461

b21l 462

dWQ= 463

VGhl 464

IGhpcw== 465

IGRv 466

IGdv 467

IGhhcw== 468

Z2U= 469

J3Q= 470

IFU= 471

cm91 472

IHNh 473

IGo= 474

IGJ1dA== 475

IHdvcg== 476

IGFsbA== 477

ZWN0 478

IGs= 479

YW1l 480

IHdpbGw= 481

b2s= 482

IHdoZQ== 483

IHRoZXk= 484

aWRl 485

MDE= 486

ZmY= 487

aWNo 488

cGw= 489

dGhlcg== 490

IHRy 491

Li4= 492

IGludA== 493

aWU= 494

dXJl 495

YWdl 496

IG5l 497

aWFs 498

YXA= 499

aW5l 500

aWNl 501

IG1l 502

IG91dA== 503

YW5z 504

b25l 505

b25n 506

aW9ucw== 507

IHdobw== 508

IEs= 509

IHVw 510

IHRoZWly 511

IGFk 512

IDM= 513

IHVz 514

YXRlZA== 515

b3Vz 516

IG1vcmU= 517

dWU= 518

b2c= 519

IFN0 520

aW5k 521

aWtl 522

IHNv 523

aW1l 524

cGVy 525

LiI= 526

YmVy 527

aXo= 528

YWN0 529

IG9uZQ== 530

IHNhaWQ= 531

IC0= 532

YXJl 533

IHlvdXI= 534

Y2M= 535

IFRo 536

IGNs 537

ZXA= 538

YWtl 539

YWJsZQ== 540

aXA= 541

IGNvbnQ= 542

IHdoaWNo 543

aWE= 544

IGlt 545

IGFib3V0 546

IHdlcmU= 547

dmVyeQ== 548

dWI= 549

IGhhZA== 550

IGVu 551

IGNvbXA= 552

LCI= 553

IElu 554

IHVu 555

IGFn 556

aXJl 557

YWNl 558

YXU= 559

YXJ5 560

IHdvdWxk 561

YXNz 562

cnk= 563

IOKA 564

Y2w= 565

b29r 566

ZXJl 567

c28= 568

IFY= 569

aWdu 570

aWI= 571

IG9mZg== 572

IHRl 573

dmVu 574

IFk= 575

aWxl 576

b3Nl 577

aXRl 578

b3Jt 579

IDIwMQ== 580

IHJlcw== 581

IG1hbg== 582

IHBlcg== 583

IG90aGVy 584

b3Jk 585

dWx0 586

IGJlZW4= 587

IGxpa2U= 588

YXNl 589

YW5jZQ== 590

a3M= 591

YXlz 592

b3du 593

ZW5jZQ== 594

IGRpcw== 595

Y3Rpb24= 596

IGFueQ== 597

IGFwcA== 598

IHNw 599

aW50 600

cmVzcw== 601

YXRpb25z 602

YWls 603

IDQ= 604

aWNhbA== 605

IHRoZW0= 606

IGhlcg== 607

b3VudA== 608

IENo 609

IGFy 610

IGlm 611

IHRoZXJl 612

IHBl 613

IHllYXI= 614

YXY= 615

IG15 616

IHNvbWU= 617

IHdoZW4= 618

b3VnaA== 619

YWNo 620

IHRoYW4= 621

cnU= 622

b25k 623

aWNr 624

IG92ZXI= 625

dmVs 626

IHF1 627

Cgo= 628

IHNj 629

cmVhdA== 630

cmVl 631

IEl0 632

b3VuZA== 633

cG9ydA== 634

IGFsc28= 635

IHBhcnQ= 636

ZnRlcg== 637

IGtu 638

IGJlYw== 639

IHRpbWU= 640

ZW5z 641

IDU= 642

b3BsZQ== 643

IHdoYXQ= 644

IG5v 645

ZHU= 646

bWVy 647

YW5n 648

IG5ldw== 649

LS0tLQ== 650

IGdldA== 651

b3J5 652

aXRpb24= 653

aW5ncw== 654

IGp1c3Q= 655

IGludG8= 656

IDA= 657

ZW50cw== 658

b3Zl 659

dGU= 660

IHBlb3BsZQ== 661

IHByZQ== 662

IGl0cw== 663

IHJlYw== 664

IHR3 665

aWFu 666

aXJzdA== 667

YXJr 668

b3Jz 669

IHdvcms= 670

YWRl 671

b2I= 672

IHNoZQ== 673

IG91cg== 674

d24= 675

aW5r 676

bGlj 677

IDE5 678

IEhl 679

aXNo 680

bmRlcg== 681

YXVzZQ== 682

IGhpbQ== 683

b25z 684

IFs= 685

IHJv 686

Zm9ybQ== 687

aWxk 688

YXRlcw== 689

dmVycw== 690

IG9ubHk= 691

b2xs 692

IHNwZQ== 693

Y2s= 694

ZWxs 695

YW1w 696

IGFjYw== 697

IGJs 698

aW91cw== 699

dXJu 700

ZnQ= 701

b29k 702

IGhvdw== 703

aGVk 704

ICc= 705

IGFmdGVy 706

YXc= 707

IGF0dA== 708

b3Y= 709

bmU= 710

IHBsYXk= 711

ZXJ2 712

aWN0 713

IGNvdWxk 714

aXR0 715

IGFt 716

IGZpcnN0 717

IDY= 718

IGFjdA== 719

ICQ= 720

ZWM= 721

aGluZw== 722

dWFs 723

dWxs 724

IGNvbW0= 725

b3k= 726

b2xk 727

Y2Vz 728

YXRlcg== 729

IGZl 730

IGJldA== 731

d2U= 732

aWZm 733

IHR3bw== 734

b2Nr 735

IGJhY2s= 736

KS4= 737

aWRlbnQ= 738

IHVuZGVy 739

cm91Z2g= 740

c2Vs 741

eHQ= 742

IG1heQ== 743

cm91bmQ= 744

IHBv 745

cGg= 746

aXNz 747

IGRlcw== 748

IG1vc3Q= 749

IGRpZA== 750

IGFkZA== 751

amVjdA== 752

IGluYw== 753

Zm9yZQ== 754

IHBvbA== 755

b250 756

IGFnYWlu 757

Y2x1ZA== 758

dGVybg== 759

IGtub3c= 760

IG5lZWQ= 761

IGNvbnM= 762

IGNv 763

IC4= 764

IHdhbnQ= 765

IHNlZQ== 766

IDc= 767

bmluZw== 768

aWV3 769

IFRoaXM= 770

Y2Vk 771

IGV2ZW4= 772

IGluZA== 773

dHk= 774

IFdl 775

YXRo 776

IHRoZXNl 777

IHBy 778

IHVzZQ== 779

IGJlY2F1c2U= 780

IGZs 781

bmc= 782

IG5vdw== 783

IOKAkw== 784

Y29t 785

aXNl 786

IG1ha2U= 787

IHRoZW4= 788

b3dlcg== 789

IGV2ZXJ5 790

IFVu 791

IHNlYw== 792

b3Nz 793

dWNo 794

IGVt 795

ID0= 796

IFJl 797

aWVk 798

cml0 799

IGludg== 800

bGVjdA== 801

IHN1cHA= 802

YXRpbmc= 803

IGxvb2s= 804

bWFu 805

cGVjdA== 806

IDg= 807

cm93 808

IGJ1 809

IHdoZXJl 810

aWZpYw== 811

IHllYXJz 812

aWx5 813

IGRpZmY= 814

IHNob3VsZA== 815

IHJlbQ== 816

VGg= 817

SW4= 818

IGV2 819

ZGF5 820

J3Jl 821

cmli 822

IHJlbA== 823

c3M= 824

IGRlZg== 825

IHJpZ2h0 826

IHN5 827

KSw= 828

bGVz 829

MDAw 830

aGVu 831

IHRocm91Z2g= 832

IFRy 833

X18= 834

IHdheQ== 835

IGRvbg== 836

ICw= 837

IDEw 838

YXNlZA== 839

IGFzcw== 840

dWJsaWM= 841

IHJlZw== 842

IEFuZA== 843

aXg= 844

IHZlcnk= 845

IGluY2x1ZA== 846

b3RoZXI= 847

IGltcA== 848

b3Ro 849

IHN1Yg== 850

IOKAlA== 851

IGJlaW5n 852

YXJn 853

IFdo 854

PT0= 855

aWJsZQ== 856

IGRvZXM= 857

YW5nZQ== 858

cmFt 859

IDk= 860

ZXJ0 861

cHM= 862

aXRlZA== 863

YXRpb25hbA== 864

IGJy 865

IGRvd24= 866

IG1hbnk= 867

YWtpbmc= 868

IGNhbGw= 869

dXJpbmc= 870

aXRpZXM= 871

IHBo 872

aWNz 873

YWxz 874

IGRlYw== 875

YXRpdmU= 876

ZW5lcg== 877

IGJlZm9yZQ== 878

aWxpdHk= 879

IHdlbGw= 880

IG11Y2g= 881

ZXJzb24= 882

IHRob3Nl 883

IHN1Y2g= 884

IGtl 885

IGVuZA== 886

IEJ1dA== 887

YXNvbg== 888

dGluZw== 889

IGxvbmc= 890

ZWY= 891

IHRoaW5r 892

eXM= 893

IGJlbA== 894

IHNt 895

aXRz 896

YXg= 897

IG93bg== 898

IHByb3Y= 899

IHNldA== 900

aWZl 901

bWVudHM= 902

Ymxl 903

d2FyZA== 904

IHNob3c= 905

IHByZXM= 906

bXM= 907

b21ldA== 908

IG9i 909

IHNheQ== 910

IFNo 911

dHM= 912

ZnVs 913

IGVmZg== 914

IGd1 915

IGluc3Q= 916

dW5k 917

cmVu 918

Y2Vzcw== 919

IGVudA== 920

IFlvdQ== 921

IGdvb2Q= 922

IHN0YXJ0 923

aW5jZQ== 924

IG1hZGU= 925

dHQ= 926

c3RlbQ== 927

b2xvZw== 928

dXA= 929

IHw= 930

dW1w 931

IGhlbA== 932

dmVybg== 933

dWxhcg== 934

dWFsbHk= 935

IGFj 936

IG1vbg== 937

IGxhc3Q= 938

IDIwMA== 939

MTA= 940

IHN0dWQ= 941

dXJlcw== 942

IEFy 943

c2VsZg== 944

YXJz 945

bWVyaWM= 946

dWVz 947

Y3k= 948

IG1pbg== 949

b2xsb3c= 950

IGNvbA== 951